Case Snapshot

- Company: Sand Studio (QA team)

- Industry: SaaS / Technology

- Use Case: Automated Test Recap & Release-Quality Reporting

- Key Outcome: Test summary authoring time cut from 540 to 180 minutes/month (-67%)

The Background

The company releases product updates frequently. Each release requires a clear, consistent test recap for stakeholders—QA leads, release owners, and engineering managers—to ensure informed sign-offs and effective post-release analysis.

Previously, the QA team handled these recaps manually. They had to gather release details, test submissions, progress notes, feedback, and defect statuses from multiple sources, then compile everything into a structured summary and log it in Jira for traceability.

The primary goal was to reduce repetitive manual work, standardize report quality, and accelerate release readiness and retrospectives—all without sacrificing necessary human oversight.

The Challenge

Fragmented data: Key inputs were scattered across internal "FollowUp" APIs (publish cards, tester steps, submission records, feedback) and Jira (defects, versions, fixVersions, statuses). Reconciling these manually was slow and prone to errors.

Inconsistent reporting: The quality and structure of reports varied depending on the author. This made it difficult to compare different versions and weakened the team's ability to build a reliable "quality knowledge base."

Review bottlenecks: Even after a draft was created, it required human validation. Without a clear follow-up mechanism, these confirmations often slipped through the cracks.

Complex versioning and mapping: With multiple Jira projects and naming conventions, the workflow needed reliable rules to map version prefixes and align custom fields correctly.
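A version-mapping rule table might look like the following minimal Python sketch. The case study does not publish its rules, so the release-name patterns (`APP-`, `WEB-`), project keys, and `customfield_*` IDs are hypothetical placeholders. Failing loudly on an unmatched name is one design choice that keeps an unmapped release from quietly producing a recap with no defects attached.

```python
import re

# Hypothetical mapping rules: prefixes, project keys, and custom field IDs
# are illustrative placeholders, not the team's real configuration.
VERSION_RULES = [
    # (pattern on the release name, Jira project key, field holding the version)
    (re.compile(r"^APP-(\d+\.\d+\.\d+)$"), "APP", "customfield_10001"),
    (re.compile(r"^WEB-(\d+\.\d+\.\d+)$"), "WEB", "customfield_10002"),
]


def map_release(release_name: str) -> dict:
    """Resolve a release name to a Jira project, fixVersion, and custom field.

    Raises ValueError when no rule matches, so an unmapped release fails
    instead of silently yielding an empty defect query.
    """
    for pattern, project_key, version_field in VERSION_RULES:
        match = pattern.match(release_name)
        if match:
            return {
                "project": project_key,
                "fixVersion": match.group(1),  # numeric part without the prefix
                "version_field": version_field,
            }
    raise ValueError(f"No version rule matches {release_name!r}")
```

For example, `map_release("APP-2.3.1")` would resolve to project `APP` with fixVersion `2.3.1`.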

The Solution

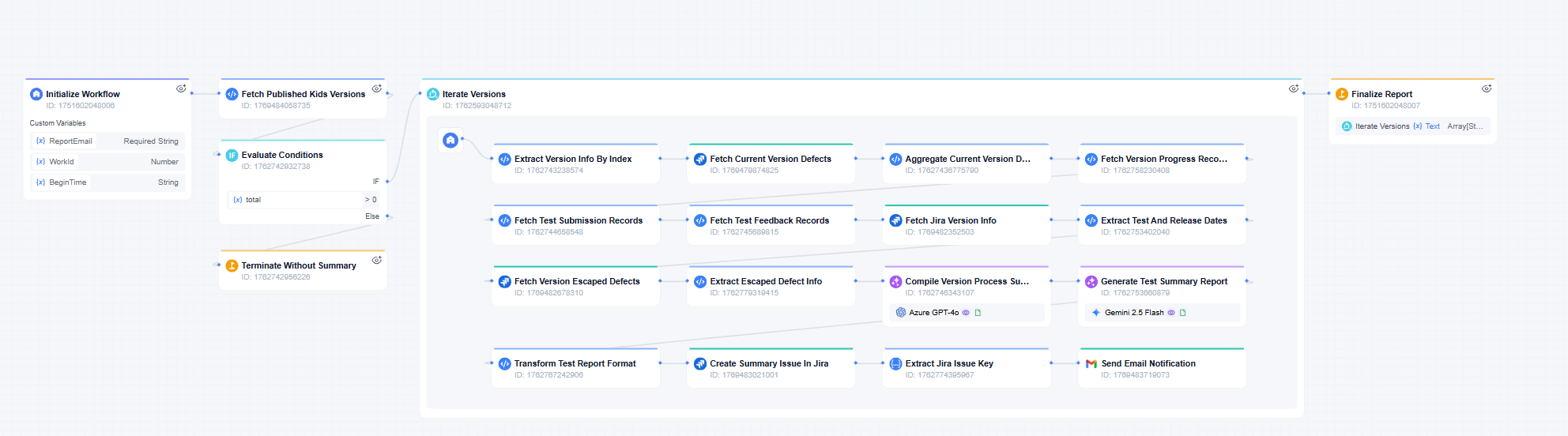

The team built the "Test Summary Auto-Generator" workflow on GoInsight.ai. It converts fragmented release signals into a structured recap through a pipeline designed for accuracy and accountability.

How the workflow works

Multi-Source Data Aggregation

The workflow creates a unified release timeline by querying internal APIs for version lists and testing logs, while simultaneously fetching defect data (including post-release "escaped" defects) directly from Jira.
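One way to picture the aggregation step is a merge of already-fetched records into a single time-ordered list. This is a sketch under assumptions: the FollowUp payload fields (`created_at`, `type`, `id`) are hypothetical, the Jira side reads the standard `key` and `fields.created` properties of an issue, and timestamps are assumed to be ISO 8601 strings with a `+HH:MM` offset (raw Jira timestamps ending in `+0000` may need normalizing first, depending on the Python version).

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class TimelineEvent:
    at: datetime
    source: str  # "followup" or "jira"
    kind: str    # e.g. "submission", "feedback", "defect"
    ref: str     # record ID or Jira issue key


def build_timeline(followup_records: list[dict], jira_issues: list[dict]) -> list[TimelineEvent]:
    """Merge FollowUp records and Jira defects into one time-ordered list.

    Assumption: FollowUp records carry hypothetical "created_at", "type",
    and "id" fields; all timestamps include a UTC offset.
    """
    events = [
        TimelineEvent(datetime.fromisoformat(r["created_at"]), "followup", r["type"], r["id"])
        for r in followup_records
    ]
    events += [
        TimelineEvent(datetime.fromisoformat(i["fields"]["created"]), "jira", "defect", i["key"])
        for i in jira_issues
    ]
    # Sorting by timestamp interleaves both sources into a single timeline.
    return sorted(events, key=lambda e: e.at)
```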

"Structure-First" Pre-processing (Critical Step)

Before engaging the LLM, the system uses deterministic rules to normalize timestamps, classify defect types (e.g., fixed vs. deferred), and compute statistics. This gives the AI clean, unambiguous input, which leaves less room for hallucinated figures and keeps reporting consistent.
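The deterministic pre-processing could be sketched as below. The classification rules are assumptions for illustration: a defect created after the release timestamp counts as escaped, an earlier one in a done-like status counts as fixed, and anything else counts as deferred. The status names in `DONE_STATUSES` are hypothetical, and the parser expects Jira's usual `2024-05-01T09:00:00.000+0000` timestamp shape.

```python
from collections import Counter
from datetime import datetime, timezone

# Hypothetical rules; the real workflow's status names and criteria are not published.
DONE_STATUSES = {"Done", "Closed", "Resolved"}


def normalize_ts(raw: str) -> datetime:
    """Parse a Jira-style timestamp into a timezone-aware UTC datetime."""
    return datetime.strptime(raw, "%Y-%m-%dT%H:%M:%S.%f%z").astimezone(timezone.utc)


def classify(defect: dict, release_at: datetime) -> str:
    if normalize_ts(defect["created"]) > release_at:
        return "escaped"   # reported after the release went out
    if defect["status"] in DONE_STATUSES:
        return "fixed"
    return "deferred"      # still open at release time


def defect_stats(defects: list[dict], release_at: datetime) -> dict:
    counts = Counter(classify(d, release_at) for d in defects)
    pre_release = counts["fixed"] + counts["deferred"]
    return {
        "total": sum(counts.values()),
        "fixed": counts["fixed"],
        "deferred": counts["deferred"],
        "escaped": counts["escaped"],
        # Fix rate over defects found before release; escaped ones are reported separately.
        "fix_rate": round(counts["fixed"] / pre_release, 3) if pre_release else 0.0,
    }
```

Because the fix rate is computed here rather than by the model, the LLM only restates numbers it was given.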

Template-Constrained AI Generation

Using the structured data, an LLM generates the narrative (covering scope, progress, defect analysis, and risks) while following a standardized template, so every report has the same structure.
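A template constraint can be enforced on both sides of the model call: the prompt lists the required headings, and a check rejects drafts that drop or reorder them. This minimal sketch assumes Markdown-style `##` headings and the four sections the case study names; the prompt wording and the `follows_template` helper are hypothetical, and the actual LLM call on GoInsight.ai is left out.

```python
import json

# Section names from the case study; exact template wording is an assumption.
SECTIONS = ["Scope", "Progress", "Defect Analysis", "Risks"]


def build_prompt(stats: dict) -> str:
    """Embed the required headings and the pre-computed statistics in the prompt."""
    headings = "\n".join(f"## {s}" for s in SECTIONS)
    return (
        "Write a release test recap using exactly these headings, in this order, "
        "and only the figures provided below. Do not invent numbers.\n\n"
        f"{headings}\n\nData:\n{json.dumps(stats, indent=2, sort_keys=True)}"
    )


def follows_template(report: str) -> bool:
    """Return True only if every required heading appears once, in order."""
    positions = []
    for s in SECTIONS:
        heading = f"## {s}"
        if report.count(heading) != 1:
            return False
        positions.append(report.index(heading))
    return positions == sorted(positions)
```

A draft that fails `follows_template` could be regenerated or routed straight to human review instead of being archived.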

Closed-Loop Governance

Archiving: The final report is automatically formatted and saved as a Jira issue to ensure long-term traceability.
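Archiving could go through Jira's REST API, which creates an issue via `POST /rest/api/2/issue` with a `fields` object. The sketch below only builds the request; the project key, issue type, label, and Bearer-token authentication are assumptions (Jira Cloud usually expects basic auth with an email and API token instead). Sending it would be a call to `urllib.request.urlopen(req)`.

```python
import json
import urllib.request


def build_archive_request(base_url: str, project_key: str, release: str,
                          report: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a Jira v2 create-issue request for a recap."""
    payload = {
        "fields": {
            "project": {"key": project_key},
            "issuetype": {"name": "Task"},   # assumption: issue type used for recaps
            "summary": f"Test Summary: {release}",
            "description": report,           # v2 accepts plain text / wiki markup here
            "labels": ["test-summary"],      # hypothetical label for later search
        }
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/rest/api/2/issue",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",  # assumption: token-based auth
        },
        method="POST",
    )
```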

Human Review: A monitoring bot tracks the review status. If the report is not confirmed within 3 days, the system sends daily reminders to the team until a human owner explicitly verifies it.
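The reminder rule described above reduces to a small predicate that a scheduled job could evaluate once a day per open report. This is a hypothetical sketch: it reads "not confirmed within 3 days" as three full days after the report was created and allows at most one reminder per calendar day.

```python
from datetime import date, timedelta
from typing import Optional

GRACE_DAYS = 3  # from the case study: reminders start after 3 unconfirmed days


def reminder_due(created: date, today: date, confirmed: bool,
                 last_reminded: Optional[date]) -> bool:
    """Decide whether the bot should send a reminder today."""
    if confirmed:
        return False  # a human owner has verified the report; stop nagging
    if today - created < timedelta(days=GRACE_DAYS):
        return False  # still inside the grace period
    # Daily cadence: remind only if nothing was sent yet today.
    return last_reminded is None or last_reminded < today
```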

The Result

Quantitative outcomes

- 67% reduction in authoring time: Test summary writing dropped from 540 minutes/month to 180 minutes/month.

- Consistent throughput: The team maintains an average of ~4 reports per month, supporting frequent iterations without adding administrative overhead.
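The headline percentage and a per-report view follow from the stated figures. The per-report numbers assume exactly 4 reports a month, which the case study gives only as an approximate average.

```latex
\begin{aligned}
\text{reduction} &= \frac{540 - 180}{540} = \frac{360}{540} \approx 0.667 \approx 67\% \\
\text{time per report} &\approx \frac{540}{4} = 135~\text{min} \;\longrightarrow\; \frac{180}{4} = 45~\text{min}
\end{aligned}
```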

Qualitative improvements

- Standardized quality: Recaps are now consistent across all versions, making it easier to spot recurring patterns and compare releases.

- Better, faster decisions: Release owners get a consolidated view of defect status, deferred items, and escaped defects in one place, without waiting for a manual compilation.

- Stronger institutional memory: Every release generates a durable Jira artifact, building a searchable history for retrospectives and continuous improvement.

- Efficient governance: Critical items can still be flagged for manual confirmation, while automation handles the heavy lifting of data compilation.

By transforming a manual, fragmented process into an automated, governed workflow, the FlashGet Kids team has made test recaps faster to produce, easier to trust, and more valuable as a long-term asset. The result is a scalable model for release reporting: structure the data first, generate consistent summaries, archive in Jira, and verify through a reliable human review loop.

"Automating the test recap workflow has streamlined our process and significantly improved reporting efficiency."
