- What is SEO Content Analysis

- How to Analyze SEO Content

- Step 1: Analyze search intent from SERP

- Step 2: Evaluate keyword coverage

- Step 3: Assess content structure

- Step 4: Evaluate content quality and E-E-A-T

- Step 5: Check SEO compliance and optimization signals

- Where Manual SEO Article Analysis Fails

- Case Study: Automating SEO Content Analysis at Scale

- How AI Transforms Content Analysis for SEO

- LLM as the evaluation engine

- RAG for context-aware analysis

- LLM for structured data extraction

- Results at The Marketing Team

- What to Expect in the Future

- FAQs

Case Snapshot

- Company: Sand Studio

- Department: Marketing Team

- Industry: SaaS / Technology

- Use Case: Automate the process of SEO content analysis with LLMs

- Key Outcome: Reduce time spent on this task to almost zero from 2 hrs per article and improve the accuracy of analysis results

In today's search landscape, publishing content is no longer enough. What determines whether a page ranks is not just what you write, but how it aligns with search intent, keyword coverage, and content structure.

That's where SEO content analysis comes in. It enables teams to assess existing and new content and identify the gaps that hold pages back from higher search rankings. However, as content production scales, the manual analysis that worked for a handful of articles breaks down when you're managing dozens or even hundreds of pages.

In this article, we'll dive into SEO content analysis, identify where manual methods break down, and show how the marketing team at Sand Studio automates content analysis and scoring at scale with LLMs.

What is SEO Content Analysis

SEO content analysis is not just text analysis in isolation. It is a systematic process of evaluating a website's existing content to determine how effectively it meets both search engine requirements and user needs, looking closely at the quality, relevance, and semantic structure of the text.

SEO content analysis should answer five core questions:

- Search intent alignment: Does the content match what users are actually searching for?

- Keyword coverage: Does it cover primary, secondary, and long-tail keywords?

- Content structure: Does it have clear headings and hierarchy to ensure readability?

- Topic authority: Does the page provide enough depth and unique insight to be considered an expert resource (E-E-A-T)?

- Content depth and relevance: Does the page have "thin" content, such as outdated information, internal cannibalization, or incomplete information?

How to Analyze SEO Content

Effective SEO content analysis is not just about keyword optimization. It is a systematic method that brings intent, keyword coverage, structure, and quality into alignment. In most cases, you can follow the five steps below to audit content effectively:

Step 1: Analyze search intent from SERP

Before looking at your own content, examine the Search Engine Results Pages (SERP) first. There are three things you need to pay attention to in this step:

- The dominant content type, such as blog posts, product pages, or videos.

- Titles, headings, and formats across top results.

- SERP features, such as featured snippets and FAQs.

After that, you will understand whether your content meets what users are actually looking for.
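As a rough illustration of the first check, the dominant content type can be tallied from a hand-labeled list of top results. The `serp` sample and its `type` labels below are made-up placeholders, not real search data, and `dominant_content_type` is a hypothetical helper name.

```python
from collections import Counter


def dominant_content_type(results):
    """Return the most common content type and its share of the results."""
    if not results:
        raise ValueError("results must not be empty")
    counts = Counter(r["type"] for r in results)
    content_type, n = counts.most_common(1)[0]
    return content_type, n / len(results)


# Placeholder sample: content types labeled by hand for one query
serp = [
    {"title": "Result A", "type": "blog post"},
    {"title": "Result B", "type": "blog post"},
    {"title": "Result C", "type": "video"},
    {"title": "Result D", "type": "blog post"},
    {"title": "Result E", "type": "product page"},
]

content_type, share = dominant_content_type(serp)
print(f"{content_type}: {share:.0%} of top results")
```

If most top results share one type, a page in a different format is likely misaligned with the intent behind that query.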

Step 2: Evaluate keyword coverage

Check how well the page targets its main topic: whether the primary keyword is placed strategically, and whether secondary and long-tail keywords are used to provide broader context and capture related search traffic. Also identify topics that top-ranking pages cover but your page is missing.
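A minimal sketch of a keyword coverage check, assuming you already have a list of target keywords for the page. The function names and the sample text are illustrative only. Matching here is exact phrase matching, so it will not catch plurals or synonyms.

```python
import re


def keyword_coverage(text, keywords):
    """Count case-insensitive, whole-phrase occurrences of each keyword."""
    lowered = text.lower()
    counts = {}
    for kw in keywords:
        pattern = r"\b" + re.escape(kw.lower()) + r"\b"
        counts[kw] = len(re.findall(pattern, lowered))
    return counts


def missing_keywords(text, keywords):
    """Return the keywords that never appear in the text."""
    return [kw for kw, n in keyword_coverage(text, keywords).items() if n == 0]


# Illustrative page text and target keywords
page = (
    "SEO content analysis helps teams audit pages. "
    "A content audit checklist keeps reviews consistent."
)
targets = ["seo content analysis", "content audit", "search intent"]

print(keyword_coverage(page, targets))
print(missing_keywords(page, targets))
```

Running the same check against top-ranking pages gives a simple way to list topics they mention and your page does not.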

Step 3: Assess content structure

Review how the content is organized and whether it’s easy to scan and understand, including:

- Clear heading hierarchy of H1, H2, and H3 tags.

- Logical flow between sections.

- Use of lists, short paragraphs, and formatting.

A proper heading structure helps search engines understand the relationship between different sections and improves skimmability for readers.
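The heading hierarchy check can be sketched with Python's standard `html.parser` module. The two rules below (exactly one H1, no skipped levels) are common conventions assumed for illustration, and `heading_issues` is a hypothetical helper name.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "h1".."h6" is enough
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html):
    """Return a list of human-readable heading structure problems."""
    parser = HeadingCollector()
    parser.feed(html)
    levels = parser.levels
    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            issues.append(f"h{prev} is followed by h{cur} (skipped level)")
    return issues


print(heading_issues("<h1>Guide</h1><h2>Setup</h2><h3>Install</h3><h2>Usage</h2>"))
print(heading_issues("<h1>Guide</h1><h3>Install</h3><h1>Another title</h1>"))
```

Moving back up the hierarchy (for example from H3 to H2) is not flagged, since that is how a new section normally starts.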

Step 4: Evaluate content quality and E-E-A-T

Go beyond the technicalities to judge the actual value of text. To ensure the page satisfies E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) standards, you need to answer the questions below in this step:

- Does the content fully cover the topic and subtopics?

- Are claims supported and reliable?

- Does it provide clear and useful answers?

- Does it provide unique insights?

- Is the information up-to-date?

Keep in mind that search engines aim to prioritize content that is comprehensive, accurate, and genuinely helpful.

Step 5: Check SEO compliance and optimization signals

Audit the on-page technical elements, including:

- Optimizing meta titles and descriptions for click-through rates (CTR).

- Ensuring images have descriptive alt text.

- Verifying internal links point to relevant and high-authority pages.

- Replace manual judgment with a unified system and evaluation standards.

- Increase the efficiency of giving feedback.

- Make content feedback structured and usable as a shared asset.

- Proactively monitor and flag low-quality content.

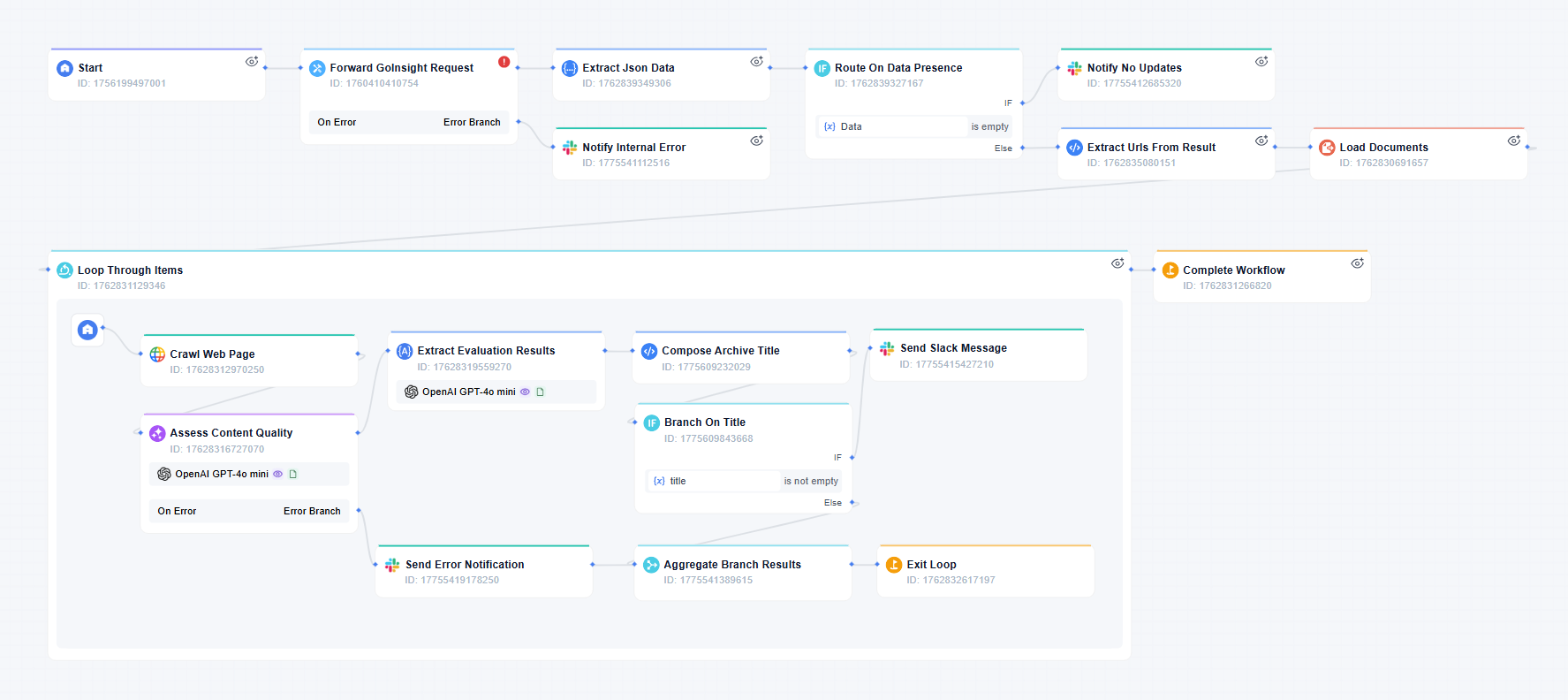

- The workflow scans the target website and picks up anything new.

- It reads the newly found articles and summarizes their main ideas, structure, and key points.

- It then checks each article against a set of guidelines and judges whether the content meets quality standards.

- Based on the comparison, it gives each article a score and an evaluation result.

- The outputs are written to databases with clear ratings, E-E-A-T results, and issues. From there, all evaluation results can be tracked, compared, and analyzed over time.

- Engineering teams: review code against internal coding guidelines and flag violations in tools like GitHub and GitLab.

- Customer support teams: audit support conversations based on SOPs, identify low-quality responses, and escalate them to supervisors.

- Legal teams: perform initial contract reviews using predefined risk criteria and detect potential issues.

Where Manual SEO Article Analysis Fails

The framework above has long proven effective for SEO content analysis and SEO audits. Follow these five steps, and you can produce a high-quality analysis of a single article. However, as the scope of an audit grows, keeping the same objectivity and level of detail across sites that contain thousands of URLs becomes a logistical bottleneck.

In practice, the marketing team at Sand Studio ran into a different set of challenges in the age of information explosion and AI: the traditional method of content analysis hit a wall in efficiency, objectivity, and large-scale execution.

Repetitive manual tasks, delayed feedback

It’s obvious that many factors can affect content performance, such as keyword targeting, search intent, and writing quality. But a sound content analysis methodology relies heavily on expert knowledge and experience, and it takes time to do well.

Senior members of the marketing team used to spend a lot of time on tedious checks, such as whether each header was properly formatted and whether the content contained both long-tail and short-tail keywords. All of these tasks were done by hand and involved switching between separate tools. Although that effort usually paid off, a manual evaluation could take hours or even a full day, which delayed the response to low-quality content and pushed back content optimization. When the number of published articles is large, manual labor becomes an even more significant bottleneck.

Biased analysis standards are fallible

“There is no foolproof method for SEO content analysis.” If you're familiar with SEO, you might agree with this idea. So does the marketing team at Sand Studio.

Even Google says in its Search Engine Optimization (SEO) Starter Guide, "There are no secrets here that'll automatically rank your site first in Google." It also suggests the golden rule is to let search engines crawl, index, and understand the content more easily.

However, in practice, the marketing team realized that different members might interpret and apply Google's guidelines in different ways, which often led to inconsistent and subjective evaluations of content quality. Many teams overlook this problem, but such inconsistency directly affects how content is prioritized and optimized. As a result, the team often struggled to make consistent decisions on what to update and to build a repeatable SEO strategy.

SEO content insights don't scale

Another challenge that caught the team's attention was that insights seldom accumulated in a usable form, because data was fragmented across multiple tools and platforms.

When the marketing team reviewed its SEO content analysis process, it found content evaluations scattered across SEO tools, spreadsheets, Slack threads, and even verbal feedback. Over time, it became difficult to trace why a piece was updated, what issues were identified, or whether similar problems had already been solved elsewhere.

This process debt compounds quickly as workflows grow more complex. Without a structured way to capture and reuse content analysis data, SEO decisions and strategies become reactive rather than systematic.

Case Study: Automating SEO Content Analysis at Scale

The sheer amount of time spent on manual SEO content analysis finally kicked off the team's journey into automation using GoInsight.AI. They built an automated SEO content analysis tool, Google Content Quality Evaluator, to scale and standardize SEO content analysis. The goals included:

The workflow is based on the team's existing, fixed content analysis process. With large language model (LLM) nodes, it acts as an SEO content expert that can analyze and score content in specific fields according to a unified standard.

Here's how it works:

How AI Transforms Content Analysis for SEO

"LLM is the brain of the Google Content Quality Evaluator," said the marketing team. While manual content analysis typically breaks down in efficiency, objectivity, and large-scale execution, the team applies LLMs to the workflow to address these limitations by standardizing evaluation, reducing manual effort, and enabling structured outputs.

LLM as the evaluation engine

One of the most significant roles the LLM plays in this workflow is standardized evaluation. The team uses the system prompt to direct the LLM to access and incorporate the Google SEO Guide. As a result, the evaluator draws on relevant guidance rather than general knowledge and measures every page against a uniform set of criteria and a single reference framework, whereas the traditional method largely relied on human reviewers' intuition.

With the LLM applying the same criteria to every page, the automation solution removes individual bias and ensures that content quality is assessed consistently and objectively across the entire site.
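To make the idea of a fixed evaluation standard concrete, here is a minimal sketch of how a system prompt could pin every run to the same guidelines and rubric. It is not the team's actual prompt: `build_messages`, the 1-to-5 scale, and the role/content message shape (a common chat format) are assumptions, and the criteria simply reuse the five core questions from earlier in this article. No model is called here.

```python
# Criteria reused from the five core questions above; whether the team's
# prompt uses these exact items is an assumption.
CRITERIA = [
    "Search intent alignment",
    "Keyword coverage",
    "Content structure",
    "Topic authority",
    "Content depth and relevance",
]


def build_messages(guideline_text, article_text, criteria=CRITERIA):
    """Build chat messages that hold every evaluation to one rubric."""
    rubric = "\n".join(f"{i}. {name}" for i, name in enumerate(criteria, 1))
    system = (
        "You are an SEO content reviewer. Judge the article only against the "
        "guidelines and criteria below, and score each criterion from 1 to 5.\n\n"
        f"Guidelines:\n{guideline_text}\n\n"
        f"Criteria:\n{rubric}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": article_text},
    ]


messages = build_messages(
    guideline_text="(excerpt from the guideline document goes here)",
    article_text="(article text goes here)",
)
print(messages[0]["content"])
```

Because the guidelines and rubric live in the system message, every article is judged with identical instructions, and only the user message changes between runs.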

RAG for context-aware analysis

To replace the "research grind" of manual SEO auditing, the team integrated Retrieval-Augmented Generation (RAG) so that the LLM can access proprietary knowledge bases before it begins the analysis. Instead of relying on general training data, the RAG layer in this SEO content analyzer acts as a digital reference desk. For every page audited, the workflow automatically retrieves the most relevant internal rules and domain-specific context and feeds them into the LLM's reasoning loop.

The adoption of RAG minimizes repetitive research work, such as the cross-referencing of different documents, and drastically reduces the cognitive load on the team.
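The retrieval step can be illustrated with a toy example. Production RAG systems typically use embeddings and a vector store; this sketch swaps in plain word-overlap cosine similarity so that it runs with the standard library only. The knowledge base snippets are paraphrased placeholders and all function names are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, snippets, k=2):
    """Return the k snippets most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        snippets, key=lambda s: cosine(q, Counter(tokenize(s))), reverse=True
    )
    return ranked[:k]


# Placeholder knowledge base entries, not quotes from any real guideline
KNOWLEDGE_BASE = [
    "Write descriptive title elements that summarize the page.",
    "Add alt text to images so their subject is clear.",
    "Use headings to organize long content into sections.",
]

question = "Does the article use clear headings for each section?"
context = retrieve(question, KNOWLEDGE_BASE, k=1)
prompt = "Relevant guidelines:\n" + "\n".join(context) + "\n\nQuestion: " + question
print(prompt)
```

Only the retrieved snippets are placed in the prompt, which is what keeps the model's judgment tied to the team's reference material rather than its general training data.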

LLM for structured data extraction

The first LLM node above outputs detailed reports on content scoring and rating, but these results are written in natural language. To move the analysis from insights to actionable data, the marketing team uses a dedicated LLM node to parse the complex, unstructured reports into structured formats for classification and archiving.

This LLM node extracts specific, high-value signals, such as ratings and E-E-A-T scores, from the evaluation report and writes them to target databases for further content optimization. This turns the SEO content audit into a production-ready data pipeline for downstream automation.
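One practical detail when storing LLM-extracted data is validating it before it reaches a database. The sketch below assumes the extraction node returns JSON. The field names (`url`, `rating`, `eeat_score`, `issues`) are hypothetical and chosen to mirror the ratings, E-E-A-T results, and issues mentioned in this article.

```python
import json
from dataclasses import dataclass, field


@dataclass
class EvaluationRecord:
    """One row of structured evaluation data (hypothetical schema)."""

    url: str
    rating: str
    eeat_score: float
    issues: list = field(default_factory=list)


def parse_record(raw_json):
    """Validate the extraction output and convert it to a typed record."""
    data = json.loads(raw_json)
    missing = {"url", "rating", "eeat_score"} - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return EvaluationRecord(
        url=str(data["url"]),
        rating=str(data["rating"]),
        eeat_score=float(data["eeat_score"]),
        issues=[str(item) for item in data.get("issues", [])],
    )


# Example output, invented for illustration
raw = '{"url": "https://example.com/post", "rating": "B", "eeat_score": 3.5, "issues": ["thin intro"]}'
record = parse_record(raw)
print(record)
```

Rejecting incomplete output at this point keeps malformed rows out of the dataset, so later comparisons over time stay reliable.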

Chaining these LLM calls lets the workflow run content analysis as a closed loop and makes execution at enterprise scale a reality.

"It's a remarkable adoption of LLM and workflow automation, because it fully automates and standardizes a complex cognitive task that relies on expert experience through a closed loop."

Results at The Marketing Team

Today, Google Content Quality Evaluator automatically checks content on target websites and delivers results daily. It has also been published to all team members, so anyone can trigger it via the “@” action in chat to check content quality. Together, these make it an essential tool for the team.

From evaluation efficiency and consistency to feedback speed and turning data into a team asset, it has been a game-changer for content analysis.

| Metric | Before | After |
|---|---|---|
| Experience required | SEO specialist level | Anyone on the team |
| Consistency of analysis results | Likely to be subjective | Standardized and objective, following the same guidelines |
| Time spent | 1-2 hours per article | Near zero |
| Feedback speed | Hours, even a full day | Almost instant |
| Scalability | Limited capacity | Scalable processing |
| Data management | Fragmented and hard to manage | Structured datasets that are easy to trace |
| Risk control | Low-quality content may remain live for extended periods before being identified | Continuous monitoring and alerts let the team quickly identify and fix low-quality content |
| Organizational asset | Difficult to build with manual effort | Built automatically by the LLM |

What to Expect in the Future

The success of this SEO content analyzer is only the beginning. While the marketing team has already unlocked significant value, they are still working on improving it. Their next goal is not only to improve the accuracy of content analysis but also to scale this modular architecture across the entire organization.

From general SEO to domain-specific authority

For now, the knowledge base is grounded in Google's official SEO guidelines, which the LLM retrieves while analyzing content quality. The results it generates meet general SEO requirements. But to handle competitive content that is more specific to the actual product, the knowledge base still needs customization.

The team plans to expand the RAG layer to include proprietary product manuals, transcripts from internal subject matter experts, and customer data points, adjust the scope of the knowledge base, and apply other nodes to improve the accuracy of content analysis.

A modular framework for cross-functional success

Because each component in the workflow can be treated as a plug-and-play module, the workflow can be extended to new scenarios without rebuilding the system from scratch. This allows other teams to adopt AI and LLM capabilities to automate tasks, including:

