Case Snapshot

- Company: Sand Studio (Recruiting Team)

- Industry: SaaS / Technology

- Use Case: Fast, automatic generation of deep, structured, role-specific interview questions.

- Key Outcome: Interview preparation time cut from 30+ min to <5 min per candidate

The Background

Hiring teams often agree on one thing: interviews are only as good as the preparation behind them. After resumes are screened, interviewers still need to turn a job description (JD) and a candidate's resume into questions that test real fit—skills, problem-solving, impact, and credibility.

In this case, a recruiting team wanted a faster, more consistent way to prepare interviews—without losing depth. They also wanted question sets that work across different interview roles: the business/technical interviewer, the department lead, and HR.

The Challenge

Interview preparation was taking too long and often lacked consistency. Interviewers spent 30+ minutes per candidate manually drafting questions, and the results depended heavily on individual experience. Important areas (technical depth, project ownership, soft skills, or culture fit) could be missed. When resumes contained vague claims or polished wording, it was hard to quickly craft strong follow-ups that test authenticity and real contribution.

The Solution

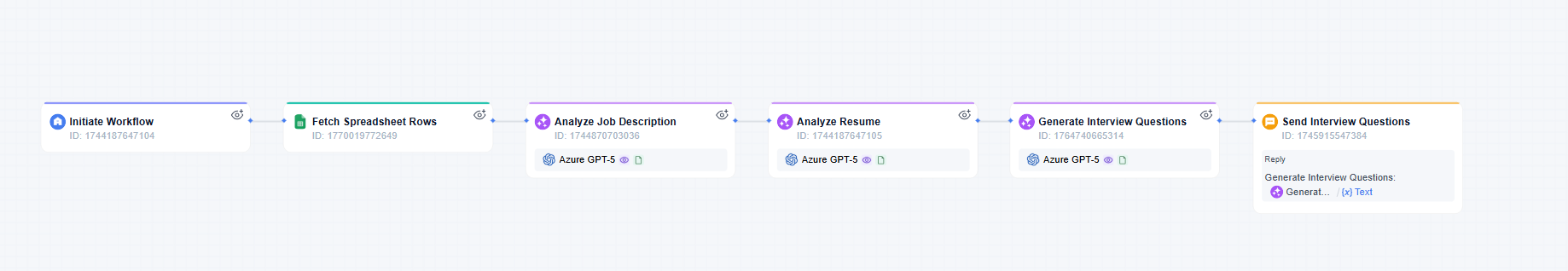

The team built a GoInsight.AI workflow that connects candidate resumes with role-specific JDs and automatically generates an interview question set that is practical and easy to use.

To ensure quality and minimize filler content, the workflow incorporates several guardrails in question design:

- Role-based routing: Questions are customized for each interview stage and interviewer role, avoiding one-size-fits-all approaches.

- Depth-first follow-ups: Each question includes its evaluation purpose and suggested probing questions to help interviewers delve deeper than surface responses.

- STAR-style prompting: Candidates are encouraged to answer using the Situation–Task–Action–Result format, making responses easier to verify.

- Output checks: Questions must reference specific details from the candidate's resume, preventing generic templates from being mistaken for personalized content.
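The output check in the last guardrail can be illustrated with a minimal Python sketch. It is not GoInsight.AI's actual implementation: it assumes the resume has already been reduced to a list of concrete details (project names, tools, metrics), and the names `check_question` and `split_by_specificity` are hypothetical. A question passes only if it mentions at least one of those details.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    """Outcome of checking one generated question (hypothetical structure)."""
    question: str
    matched_details: list[str]

    @property
    def is_specific(self) -> bool:
        # A question counts as resume-specific if it cites at least one detail.
        return bool(self.matched_details)


def check_question(question: str, resume_details: list[str]) -> CheckResult:
    """Find which resume details a question mentions (case-insensitive substring match)."""
    lowered = question.lower()
    matched = [detail for detail in resume_details if detail.lower() in lowered]
    return CheckResult(question, matched)


def split_by_specificity(
    questions: list[str], resume_details: list[str]
) -> tuple[list[str], list[str]]:
    """Separate resume-specific questions from generic ones that need regeneration."""
    results = [check_question(q, resume_details) for q in questions]
    specific = [r.question for r in results if r.is_specific]
    generic = [r.question for r in results if not r.is_specific]
    return specific, generic


# Example data is invented for illustration only.
resume_details = ["Kafka", "checkout service"]
questions = [
    "Tell me about a time you faced a challenge.",
    "On the checkout service migration, what was your task, "
    "and what did you change in the Kafka consumers?",
]
specific, generic = split_by_specificity(questions, resume_details)
```

Here the first question lands in `generic` because it cites nothing from the resume, while the second lands in `specific`. A substring match is deliberately simple; a real workflow would likely need fuzzier matching to catch paraphrased details.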

Key features that made it successful:

- Structured, repeatable output (not one-off chats): The workflow consistently produces question sets covering tech skills, project depth, soft skills, and strategic thinking.

- Smart routing for true personalization: A natural-language classifier sorts candidates by role type (technical, operations, corporate) and distinguishes first-round (execution) from second-round (strategy) questions.

- Built-in quality controls: STAR-method guidelines, a "spoken style" rewrite step, and placeholder validation prevent generic questions and ensure follow-ups are resume-specific.
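As a rough illustration of the routing and placeholder validation described above, the Python sketch below uses a keyword lookup as a stand-in for the natural-language classifier, which is a deliberate simplification and not how the workflow itself classifies roles. The keyword lists, the placeholder syntax (`{{name}}` or `[NAME]`), and all function names are assumptions made for this example.

```python
import re

# Hypothetical keyword lists; the real workflow uses a natural-language classifier.
ROLE_KEYWORDS = {
    "technical": {"engineer", "developer", "backend", "data"},
    "operations": {"operations", "logistics", "support", "supply"},
    "corporate": {"finance", "legal", "hr", "marketing"},
}

# Round-to-focus mapping as stated in the case study.
ROUND_FOCUS = {1: "execution", 2: "strategy"}

# Assumed placeholder styles: {{project_name}} or [COMPANY].
PLACEHOLDER_PATTERN = re.compile(r"\{\{\s*\w+\s*\}\}|\[[A-Z][A-Z_ ]*\]")


def classify_role(jd_text: str) -> str:
    """Pick the role type whose keywords appear most often in the JD."""
    tokens = set(re.findall(r"[a-z]+", jd_text.lower()))
    scores = {role: len(tokens & keywords) for role, keywords in ROLE_KEYWORDS.items()}
    best_role = max(scores, key=scores.get)
    return best_role if scores[best_role] > 0 else "unknown"


def round_focus(interview_round: int) -> str:
    """Map the interview round to its question focus."""
    return ROUND_FOCUS[interview_round]


def find_placeholders(question: str) -> list[str]:
    """Return any unfilled template placeholders left in a generated question."""
    return PLACEHOLDER_PATTERN.findall(question)


role = classify_role("Senior Backend Engineer building data pipelines")
leftovers = find_placeholders("Tell me about {{project_name}} at [COMPANY].")
```

A question with a non-empty `find_placeholders` result would be sent back for regeneration rather than shown to an interviewer, since a leftover placeholder signals that no resume detail was filled in.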

The Result

Efficiency gain: Interview preparation time dropped from 30+ minutes to under 5 minutes per candidate, measured within the recruiting team's standard interview preparation process.

Quality improvements:

- Better coverage across skills, project experience, soft skills, and role-level expectations.

- Deeper verification through resume-based probing questions, improving authenticity checks.

- More consistent interview standards, reducing variance between interviewers and across roles.

Why this matters (and who it fits best)

This case shows where GoInsight.AI is strongest: turning AI from "helpful writing" into a repeatable team capability—built once by a few Builders and used by many Operators in a standard workflow.

It is a strong fit for organizations that:

- run high-volume or high-stakes hiring,

- want consistent interview quality across teams,

- need role-based interview structures (Hiring Manager + Department Lead + HR),

- and want interview preparation to be collaborative, fast, and auditable.

If your hiring process depends on structured evaluation and repeatability—especially across multiple roles, teams, or clients—this approach is a practical way to scale interview quality without scaling preparation time.
