Highlights

- Reducing bug evaluation time from 30 minutes to just 2 minutes per case

- Cutting ineffective communication during bug disputes by over 40%

- Automating technical analysis to bridge the gap between QA and Developers

Overview

Bug triaging often causes friction in fast-paced R&D teams. When developers mark a bug as "Won't Fix," testers usually lack the code-level insight to argue back. This leads to subjective debates and ignored risks. To stop the guessing games, Sand Studio's R&D team built a workflow that brings objective, technical facts into the conversation.

The challenge

Before implementing AI-assisted triage, the R&D team faced three main bottlenecks:

Endless Debates: Testers could not easily challenge complex developer explanations, leading to high communication costs and frustration.

Hidden Technical Details: "Won't Fix" reasons were often buried in jargon. Testers could not tell if a fix was truly blocked by the architecture or simply avoided.

Wasted Time on Research: To appeal a decision, testers had to manually dig through old Jira tickets and code commits, burning hours of productive time.

The solution: AI-assisted triage

The team created an automated workflow in GoInsight.AI to act as a technical referee. It reads the full context of a bug and provides a clear, data-backed report so both sides can see the facts.

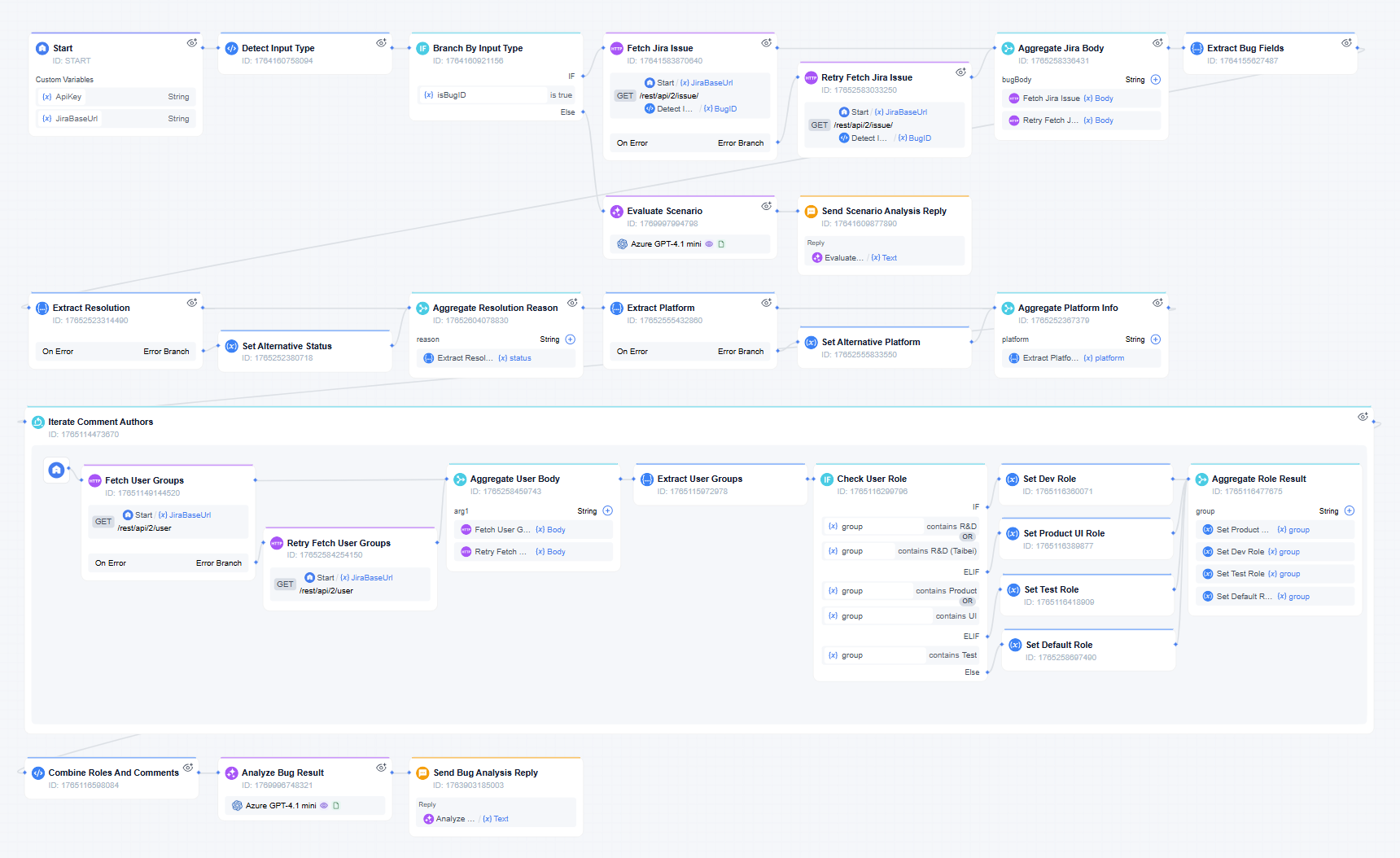

How the workflow works

- Pulling the Real Story: The workflow uses a Jira API node to fetch the full ticket, including the description, priority, and every historical comment, not just the title.

- Identifying the Speakers: The system loops through the comments and checks each author's user group, separating what the "Developer" said from what the "QA" said.

- Spotting the Conflict: A Python script formats the messy Jira thread into a clear conversation. The AI reads this to figure out exactly where the tester and developer disagree.

- Delivering the Verdict: The AI generates a clear report. Testers immediately know whether to accept the developer's reasoning or push back with facts.
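The formatting step can be pictured with a short Python sketch. This is not Sand Studio's actual script, only a minimal illustration under stated assumptions: the `Comment` class, the `format_thread` function, and the timestamp layout (`2024-05-01T09:00:00.000+0000`) are hypothetical, and each comment is assumed to arrive with its role already resolved.

```python
from dataclasses import dataclass
from datetime import datetime

# Assumed timestamp layout; adjust if your Jira instance returns another one.
JIRA_TIME_FORMAT = "%Y-%m-%dT%H:%M:%S.%f%z"


@dataclass
class Comment:
    author: str
    role: str      # e.g. "Developer" or "QA", assumed to be resolved upstream
    created: str   # timestamp string in JIRA_TIME_FORMAT
    body: str


def format_thread(issue_key: str, summary: str, comments: list[Comment]) -> str:
    """Render the comments oldest-first as a role-labeled transcript."""
    ordered = sorted(
        comments, key=lambda c: datetime.strptime(c.created, JIRA_TIME_FORMAT)
    )
    lines = [f"Issue {issue_key}: {summary}", ""]
    for c in ordered:
        text = " ".join(c.body.split())  # collapse newlines and repeated spaces
        lines.append(f"[{c.role}] {c.author} ({c.created}): {text}")
    return "\n".join(lines)
```

The resulting string puts one speaker per line in chronological order, so a model prompt can refer to "the Developer" and "the QA" without parsing raw Jira markup.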

The star feature: Smart Identity Recognition

The core strength of this bug triaging workflow is the Identity Recognition Loop. Instead of treating all text the same, this workflow checks the user group of every participant. Because the AI knows who is a developer and who is a tester, it understands the difference between a "technical pushback" and a "user impact" argument. This makes the analysis much smarter.
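As a rough illustration of the identity check, the sketch below tags each comment with a role based on its author's groups. Everything here is an assumption made for the example: the group names in `GROUP_TO_ROLE`, the `resolve_role` and `tag_comments` helpers, and the comment shape (a dict with `author.accountId`). The group lookup is passed in as `fetch_groups` so the logic can be tried without a live Jira connection.

```python
from typing import Callable, Iterable

# Hypothetical group names; substitute whatever your Jira instance uses.
GROUP_TO_ROLE = {"developers": "Developer", "qa-team": "QA"}


def resolve_role(groups: Iterable[str]) -> str:
    """Map a user's group names to one role; the first matching group wins."""
    for group in groups:
        role = GROUP_TO_ROLE.get(group.lower())
        if role:
            return role
    return "Other"


def tag_comments(
    comments: list[dict], fetch_groups: Callable[[str], list[str]]
) -> list[dict]:
    """Return copies of the comments with a "role" key added.

    Each author's groups are fetched once and cached for the rest of the loop.
    """
    cache: dict[str, str] = {}
    tagged = []
    for comment in comments:
        account_id = comment["author"]["accountId"]
        if account_id not in cache:
            cache[account_id] = resolve_role(fetch_groups(account_id))
        tagged.append({**comment, "role": cache[account_id]})
    return tagged
```

Caching by account ID keeps the number of lookups proportional to the number of participants rather than the number of comments, which matters on long dispute threads. Authors outside both groups fall back to "Other" instead of being guessed at.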

The impact

About 93% faster decisions

Evaluation time per bug dropped from 30 minutes to just 2 minutes, a reduction of roughly 93%. The team now works through disputed tickets without the endless back-and-forth.

Stopping the arguments

By giving everyone the same objective report, the team cut ineffective communication by over 40%. QA and Developers now have a shared baseline, ending "gut-feeling" arguments.

Better software quality

High-risk bugs are no longer ignored because of technical jargon. The transparency of AI-assisted triage ensures that user-facing issues actually get fixed.

What's next

The R&D team plans to connect this workflow to their code repositories to find the root causes of bugs even faster. This will save senior experts even more time during manual reviews.

"It acts like a technical arbitrator. Testers no longer have to argue based on feeling—they use professional reports to drive the conversation. It has ended the bug dispute wars."
